SH4 in Compiler Explorer

Thanks to the effort of Matt Godbolt (who, hilariously enough, is a former Dreamcast developer himself), the SuperH GCC toolchain is now available in Compiler Explorer, along with all of the SH4-specific compiler flags and options typically used when targeting the Dreamcast. This gives us an invaluable tool for getting immediate feedback on how a given C or C++ source segment translates into SH4 assembly, offering a little sandbox for testing and optimizing code targeting the Dreamcast.

Configuration

To arrive at a configuration mirroring a Dreamcast development environment, first select one of the GCC compiler versions for the SH architecture. Secondly, the following compiler options should be used as the baseline configuration:

- `-ml`: compile code for the processor in little-endian mode
- FPU Mode:
  - `-m4-single`: generate code for the SH4 with a floating-point unit that supports both single and double-precision floating point arithmetic that defaults to single-precision mode upon function entry
  - `-m4-single-only`: generate code for the SH4 with a floating-point unit that only supports single-precision floating point arithmetic
- `-ffast-math`: breaks strict IEEE compliance and allows for faster floating point approximations
- `-O3`: optimization level 3
- `-mfsrra`: enables emission of the fsrra instruction for reciprocal square root approximations (not available in GCC 4.7.4)
- `-mfsca`: enables emission of the fsca instruction for sine and cosine approximations (not available in GCC 4.7.4)
- `-matomic-model=soft-imask`: enables support for C11 and C++11 atomics by disabling then reenabling interrupts around atomic variable operations
- `-mtas`: backs C11's `atomic_flag` type by emitting the tas.b instruction, which atomically tests and sets the flag's value, also causing a purge of the cache line it lies within
- `-ftls-model=local-exec`: enables the model used by KOS for supporting variables declared with the `thread_local` keyword
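
To make the last three options more concrete, here is a minimal C11 sketch of the kind of source they affect. The `demo_*` names are hypothetical and are not part of KOS, and the comments only restate the option descriptions above; what a given GCC version actually emits is best checked by pasting the code into Compiler Explorer with the baseline flags.

```c
#include <stdatomic.h>
#include <stdbool.h>

/* All demo_* names are hypothetical, for illustration only. */

/* atomic_flag: per the -mtas description, test-and-set may become tas.b. */
static atomic_flag demo_lock = ATOMIC_FLAG_INIT;

/* atomic_int: per the -matomic-model=soft-imask description, updates are
   made atomic by masking interrupts around the operation. */
static atomic_int demo_counter;

/* Thread-local storage: handled according to -ftls-model=local-exec. */
static _Thread_local int demo_calls_on_this_thread;

/* Returns true if the lock was acquired (the flag was previously clear). */
bool demo_try_lock(void) {
    return !atomic_flag_test_and_set(&demo_lock);
}

void demo_unlock(void) {
    atomic_flag_clear(&demo_lock);
}

/* Bumps the shared counter and returns its new value. */
int demo_increment(void) {
    ++demo_calls_on_this_thread;
    /* atomic_fetch_add returns the previous value, so add 1. */
    return atomic_fetch_add(&demo_counter, 1) + 1;
}

/* Number of demo_increment() calls made by the calling thread. */
int demo_calls(void) {
    return demo_calls_on_this_thread;
}
```

Comparing the assembly for `demo_try_lock()` and `demo_increment()` with and without `-mtas` and `-matomic-model=soft-imask` is a quick way to see what each option changes.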

Convenience Templates

The following are pre-configured templates you can use as sample Dreamcast build configurations:

- GCC 4.9.4
- GCC 9.5.0
- GCC 12.2.0
- GCC 13.1.0
- GCC 13.2.0
- GCC 14.1.0
- GCC 14.2.0
- GCC 15.1.0
- GCC 15.2.0

Pre-configured template for ARM GCC 8.5, targeting the Dreamcast's "AICA" sound chip:

Tips and Notes

- It has been noted that while `-O3` is claimed to be the highest optimization level according to recent GCC documentation, some code differences can still be seen under certain circumstances when using `-O4` and beyond.
- The compiler seems to ignore both `-mfsrra` and `-mfsca` without the `-ffast-math` option.
- With the proper flags enabled for fast-math, the compiler is smart enough to leverage the following from pure C code, almost certainly better than you can do with small intrinsic-style inline ASM calls, provided you're using the proper single-precision versions of any `<math.h>` routines:

| Assembly Output | C/C++ Input |
|---|---|
| FMAC | z = x * y + z |
| FSCA | s = sinf(angle); c = cosf(angle) |
| FSRRA | 1.0f / sqrtf(x) |

- Unfortunately, the compiler has no knowledge of the following SIMD instructions, even with fast-math, so inline assembly routines (provided by KallistiOS) are needed to fully leverage the SH4's FPU when working with vectors and matrices in linear algebra routines:

| Instruction | Operation |
|---|---|
| FIPR | Vector4 Dot Product |
| FTRV | Vector4 * Matrix4x4 Transform |

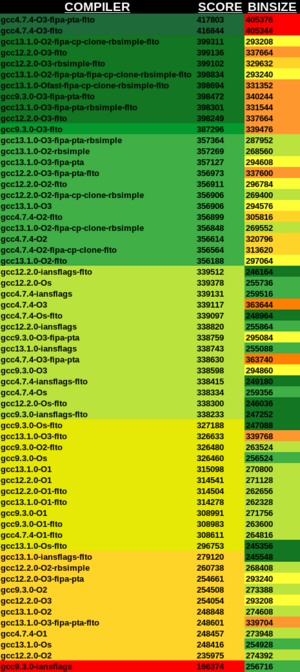
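
For reference, the arithmetic behind FIPR and FTRV can be written in portable C as in the sketch below. This only illustrates the math: the `demo_*` types and functions are hypothetical and are not the KallistiOS API, column-major matrix storage is an assumption made for the example, and, per the note above, GCC is not expected to turn this code into FIPR or FTRV.

```c
/* Hypothetical types for illustration only; not the KallistiOS API. */
typedef struct { float x, y, z, w; } demo_vec4;

/* Assumption: column-major storage, indexed as m[column][row]. */
typedef struct { float m[4][4]; } demo_mat4;

/* The operation FIPR performs: a 4-component dot product. */
float demo_dot4(demo_vec4 a, demo_vec4 b) {
    return a.x * b.x + a.y * b.y + a.z * b.z + a.w * b.w;
}

/* The operation FTRV performs: a 4x4 matrix applied to a 4-component vector. */
demo_vec4 demo_transform(const demo_mat4 *mat, demo_vec4 v) {
    demo_vec4 r;
    r.x = mat->m[0][0] * v.x + mat->m[1][0] * v.y
        + mat->m[2][0] * v.z + mat->m[3][0] * v.w;
    r.y = mat->m[0][1] * v.x + mat->m[1][1] * v.y
        + mat->m[2][1] * v.z + mat->m[3][1] * v.w;
    r.z = mat->m[0][2] * v.x + mat->m[1][2] * v.y
        + mat->m[2][2] * v.z + mat->m[3][2] * v.w;
    r.w = mat->m[0][3] * v.x + mat->m[1][3] * v.y
        + mat->m[2][3] * v.z + mat->m[3][3] * v.w;
    return r;
}
```

Compiling this in Compiler Explorer shows the scalar multiply and add sequence GCC produces instead, which makes a handy baseline for comparison against the inline assembly routines.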

- Typically smaller code sizes and more tightly optimized code are seen with newer versions of GCC versus the older ones; however, this is not always the case.

- Evidently, even without a branch predictor, the C++20 `[[likely]]` and `[[unlikely]]` attributes as well as the GCC intrinsic `__builtin_expect()` can have a fairly profound impact on code generation and optimization for conditionals and branches. More information can be found here.
- `-fipa-pta` allows the compiler to analyze pointer and reference usage beyond the scope of the function currently being compiled, which very often results in pretty decent performance increases at the cost of increased compile times and RAM usage.
- `-flto` allows GCC to perform optimizations over the entire program and all translation units as a single entity during the linking phase, at the cost of increased compile times and RAM usage. This frequently results in more performant code.
- An in-depth benchmark comparing the run-time performance and compiled binary size output of every toolchain version officially supported by KOS with various optimization levels can be found here.
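
Returning to the branch-hint note above, a minimal C sketch for experimenting with `__builtin_expect()` might look like the following. The `DEMO_LIKELY` and `DEMO_UNLIKELY` macros and the `demo_sum()` function are hypothetical names chosen for this example. The hint only tells GCC which path to favor when laying out the code; it does not change what the function returns.

```c
#include <stddef.h>

/* Hypothetical convenience macros wrapping the GCC builtin. */
#define DEMO_LIKELY(x)   __builtin_expect(!!(x), 1)
#define DEMO_UNLIKELY(x) __builtin_expect(!!(x), 0)

/* Sums a buffer, marking the NULL check as the rarely taken path.
   Returns -1 for a NULL buffer (a simplification for this demo). */
int demo_sum(const int *values, size_t count) {
    if (DEMO_UNLIKELY(values == NULL)) {
        return -1;
    }

    int total = 0;
    for (size_t i = 0; i < count; ++i) {
        total += values[i];
    }
    return total;
}
```

Flipping `DEMO_UNLIKELY` to `DEMO_LIKELY` and comparing the two assembly listings side by side in Compiler Explorer shows how the hint affects branch layout for a given GCC version.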